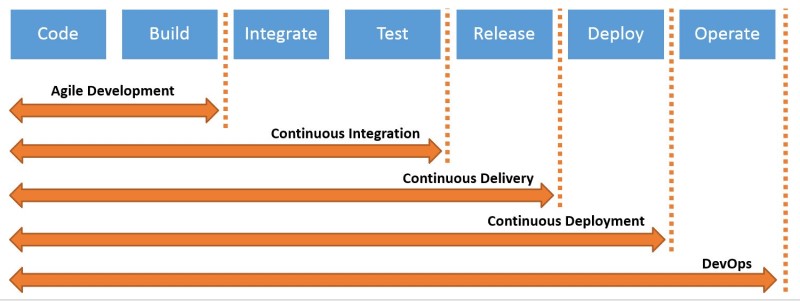

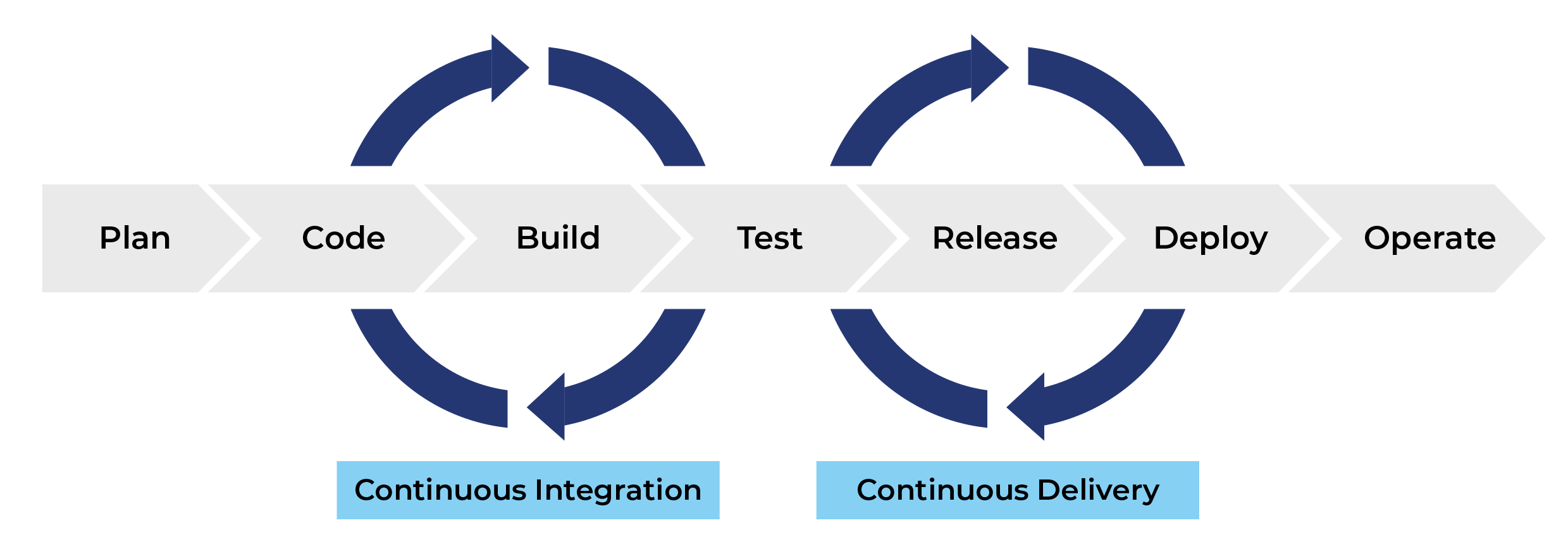

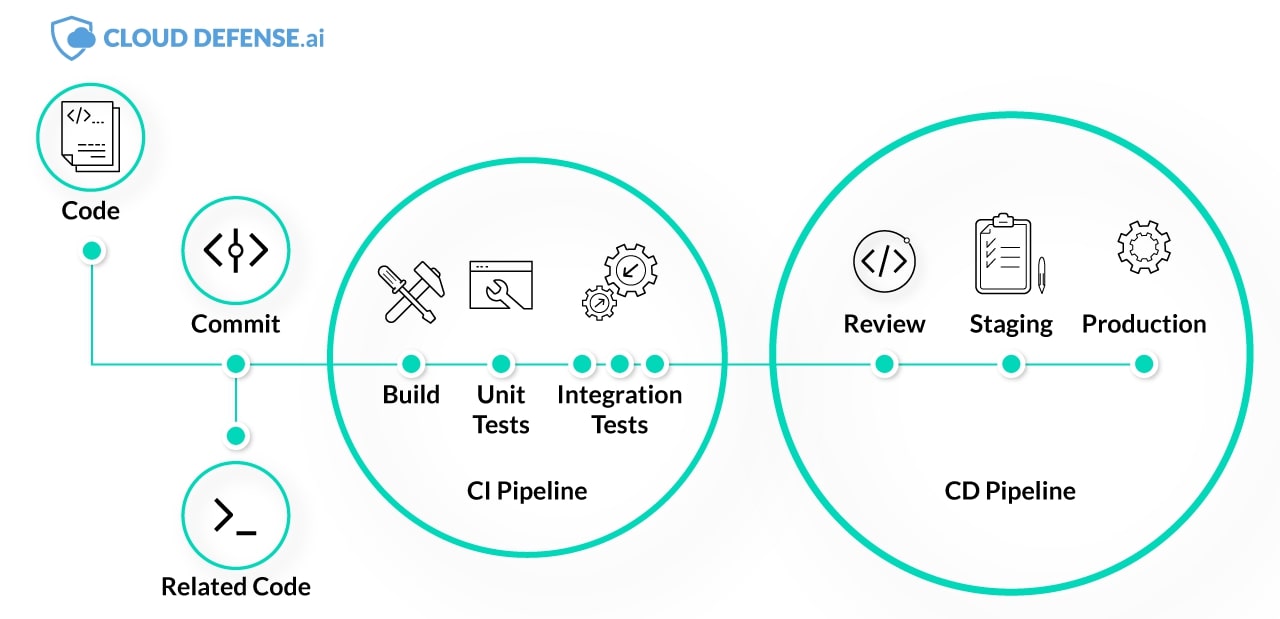

Central to the practice of DevOps are the twin processes of Continuous Integration and Continuous Delivery (CI/CD).

Understanding how a CI/CD pipeline works is the fundamental step toward adopting and implementing an effective CI/CD pipeline framework that enables an organization to release its software products faster, and in a streamlined manner that produces fewer defects.

However, an organization cannot blindly follow the technical aspects of a CI/CD pipeline and expect it to yield the desired results. It is also important that its technical team understand how the application of the agile methodology and DevOps practices contributes to faster delivery cycles.

Therefore, a successful CI/CD implementation requires a comprehension and appreciation of the cultural change needed to support an effective CI/CD pipeline.

Continuous Integration vs Continuous Delivery vs Continuous Deployment

Behind every successful DevOps practice are at least two of these three pillars of implementation: Continuous Integration, Continuous Delivery, and Continuous Deployment.

Continuous Integration (CI)

The purpose of CI is to provide a consistent and automated way to build, test, and package applications. A consistent CI process encourages teams to commit code changes more frequently, thereby boosting software quality and collaboration.

Instead of letting developers keep their code in private branches on their own computers, only to integrate it at the end of the release cycle, CI ensures that they merge their code changes into the main trunk of the shared repository frequently, perhaps several times a day.

There are several advantages to this process, one of which is that it allows everyone in the development team to be working on the latest code, instead of operating on outdated assumptions.

Another benefit is that errors and integration bugs are detected much earlier, and as a result, are rectified much more quickly. This ultimately reduces the cost of maintenance and fixing bugs later on in the process.

In CI, a new build is triggered whenever code changes are merged into the shared repository. This process is automated, so it runs smoothly with limited manual intervention.

Tests, most notably integration tests but also unit tests, are executed against these builds to ensure that nothing in the system is broken and that the builds perform as expected.

Automated tests aren’t required, but they are a best practice incorporated into the CI pipeline that ensures a measure of quality control is maintained.
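As a rough illustration of the kind of automated check a CI job might run against each build, here is a minimal Python sketch using the standard `unittest` module. The function `apply_discount`, the test class, and `build_is_green` are hypothetical names invented for this example; a real pipeline would run the project's own test suite instead.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical application code under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


def build_is_green() -> bool:
    """Run the suite as a CI job might and report pass or fail."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

A CI service would typically treat a `False` result here as a failed build and block the change from moving further down the pipeline.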

Continuous Delivery (CD)

CD comes after CI, and it ensures that the code changes such as features, bug fixes, and configuration changes are prepared for release to the production system in a quick, yet safe and sustainable manner.

Like CI, the Continuous Delivery pipeline achieves its purpose primarily through automation, making the entire process less error prone.

Running automated tests on each code build during the CD pipeline provides assurance to developers and other stakeholders that the code being checked in has a certain baseline quality, and will function as expected.

Another purpose of CD is to reduce the time taken to deploy code into production. It ensures that at any point in time, an organization has a built, tested, and deployment-ready software artifact that has been adequately validated by a process of safety checks.

Continuous Deployment

Continuous Deployment is similar to Continuous Delivery in all respects except one – it represents a step up from Continuous Delivery in that every code change is now automatically deployed to the production system without waiting for the explicit approval of the developer.

In other words, no manual intervention is required.
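The difference between the two practices can be reduced to a single release gate. The sketch below is only an illustration under assumed names (`ReleaseMode`, `Build`, and `can_release` are not from any particular tool): both modes require passing tests, but only Continuous Delivery waits for a manual sign-off.

```python
from dataclasses import dataclass
from enum import Enum


class ReleaseMode(Enum):
    CONTINUOUS_DELIVERY = "delivery"      # release waits for a human sign-off
    CONTINUOUS_DEPLOYMENT = "deployment"  # release happens automatically


@dataclass(frozen=True)
class Build:
    version: str
    tests_passed: bool
    manually_approved: bool = False


def can_release(build: Build, mode: ReleaseMode) -> bool:
    """Decide whether a build may go to production under the given mode."""
    if not build.tests_passed:
        return False  # neither mode ships a build that failed its tests
    if mode is ReleaseMode.CONTINUOUS_DEPLOYMENT:
        return True   # no manual approval step
    return build.manually_approved


candidate = Build(version="1.4.0", tests_passed=True)
```

With this sketch, `candidate` is releasable under Continuous Deployment right away, but under Continuous Delivery only once `manually_approved` is set.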

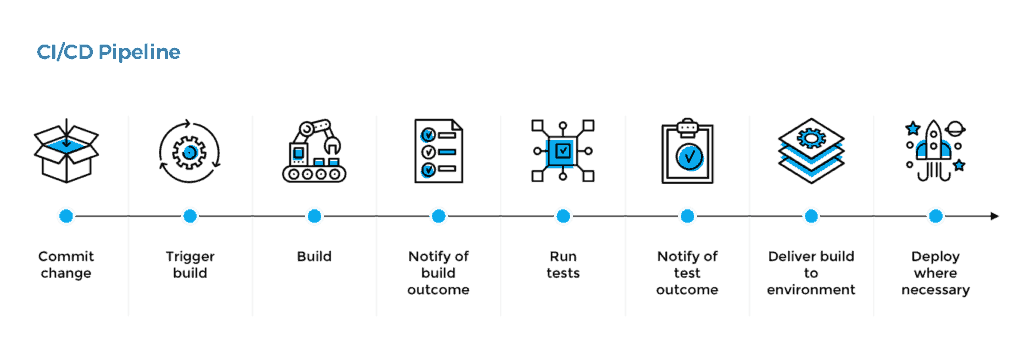

What Is a CI/CD Pipeline

In a nutshell, CI/CD is an automated pipeline for the software delivery process. A CI/CD pipeline is one of the best modern practices that have evolved to enable a DevOps team to deliver quality software in a sustainable manner.

It bridges the gap between IT operations and software development through a myriad of activities such as testing, integration, deployment, and automating the software delivery process.

The CI/CD pipeline has become imperative if an organization desires to ship bug-free code at high velocity. In addition to eliminating manual errors through automation, it provides a standardized feedback loop to the DevOps team that produces faster iterations.

The activities of a CI/CD pipeline can be summarized in these four stages:

Source Stage

This is the starting point of the pipeline, triggered by the creation of, or a change to, the source code repository, such as a git push command.
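A trigger of this kind can be pictured as a small filter over repository events. The event shape used below (a mapping with `type` and `ref` keys) and the function name are assumptions made for illustration; each hosting service defines its own payload format. The `refs/heads/<branch>` naming is how Git itself refers to branches, and the trunk being called `main` is also an assumption.

```python
from typing import Mapping

TRUNK_REF = "refs/heads/main"  # assumption: the trunk branch is named "main"


def should_trigger_pipeline(event: Mapping[str, str], trunk_ref: str = TRUNK_REF) -> bool:
    """Start the pipeline only for pushes that land on the trunk branch."""
    return event.get("type") == "push" and event.get("ref") == trunk_ref


push_to_main = {"type": "push", "ref": "refs/heads/main"}
push_to_feature = {"type": "push", "ref": "refs/heads/feature/login"}
```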

Build Stage

This occurs when the source code and its associated dependencies are compiled to build a runnable instance of the application product. Interpreted languages such as Python, PHP, and JavaScript don’t require a compile step.

No matter the language, cloud-native software is usually deployed as containers, with either Kubernetes or Docker.

Command compile —> docker bui

Test Stage

This is one of the most important aspects of the CI/CD pipeline: using automated tests to validate the code’s correctness and viability.

It isn’t unusual for large-scale projects to run tests in several stages, ranging from smoke tests to end-to-end integration tests: smoke -> unit -> integration.

Deploy Stage

After the runnable build has passed all its tests, the next phase in the pipeline is deployment. Teams often have multiple deployment environments, such as alpha and beta (for the internal product team and a few select users) and, eventually, production for the end users: staging -> QA -> production.
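The four stages above can be sketched as an ordered list of steps that stops at the first failure. This is a simplified model, not how any specific CI/CD product works; `run_pipeline` and the placeholder stage actions are hypothetical, and in practice each action would invoke real tooling.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first one that fails."""
    log: List[str] = []
    for name, action in stages:
        succeeded = action()
        log.append(f"{name}: {'ok' if succeeded else 'FAILED'}")
        if not succeeded:
            break  # later stages never run on a broken build
    return log


# Placeholder actions; here the test stage fails on purpose.
stages: List[Stage] = [
    ("source", lambda: True),
    ("build", lambda: True),
    ("test", lambda: False),
    ("deploy", lambda: True),
]

pipeline_log = run_pipeline(stages)
```

Because the test stage fails in this example, the deploy stage is never reached, which is the behavior that keeps a broken build away from users.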

Benefits of a CI/CD Pipeline

Before the development of DevOps, software developers and systems administrators were segregated in their own silos, and hardly worked together.

Developers also had to manually integrate their code with that of the rest of the team when they were done.

They usually merged their code infrequently, at intervals of several days or even weeks, depending on when the next build was scheduled.

The problem with this approach was the significant time lapse before a developer could realize whether the introduction of the new code from other team members had broken existing functionality.

But the Internet and the changing expectations of customers for rapidly delivered products compelled organizations to seek ways to release a constant flow of updates and features to quickly meet the challenges of evolving, competitive markets.

These improvements led to the following benefits of incorporating a CI/CD pipeline:

Increasing Productivity

Continuous Integration increases the frequency with which developers merge their local changes into the main branch.

This has several benefits, such as improving developers’ collaboration and efficiency, and shortening an organization’s time-to-market.

Production of More Dependable Releases

Frequent, small software deployments are less risky. With a smaller deployment footprint, it is easier to locate where a bug or error was introduced into the system simply by identifying the deployment after which the problem first appeared.
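When every deployment is small and recorded in order, finding the one that introduced a problem becomes a search over that history. The sketch below uses a binary search and assumes that once a defect appears, every later deployment also shows it. The function name and the `is_healthy` check are hypothetical stand-ins for whatever verification a team actually uses.

```python
from typing import Callable, Optional, Sequence


def first_bad_deployment(
    deployments: Sequence[str],
    is_healthy: Callable[[str], bool],
) -> Optional[str]:
    """Return the earliest unhealthy deployment, or None if all are healthy.

    Assumes deployments are ordered oldest to newest and that every
    deployment after the first bad one is also bad.
    """
    low, high = 0, len(deployments)
    while low < high:
        middle = (low + high) // 2
        if is_healthy(deployments[middle]):
            low = middle + 1   # the defect was introduced later
        else:
            high = middle      # this one or an earlier one is the culprit
    return deployments[low] if low < len(deployments) else None


history = ["v1", "v2", "v3", "v4", "v5"]
broken = {"v4", "v5"}  # illustrative data: the defect arrived with v4
culprit = first_bad_deployment(history, lambda version: version not in broken)
```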

Faster Fixing of Bugs and Patching of Problems

Because bugs are found faster, their root cause is also easier to identify and fix.

Mitigate the Risk of Failure

Because of the automated testing built into the CI/CD pipeline, the risk and magnitude of failure are drastically reduced.

Hence, developers are emboldened to innovate and take chances because of fail-safe and fail-smart conditions: an early warning system for failure in the form of testing, the ease of locating and resolving issues, and the ability to restore the last good system state from version control repositories.
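Restoring the last good state amounts to walking back through the release history until a healthy entry is found. The following is a minimal sketch with made-up data; `Release` and `rollback_target` are hypothetical names, and how a release is judged healthy is left open.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Release:
    commit: str
    healthy: bool


def rollback_target(history: List[Release]) -> Optional[Release]:
    """Return the most recent healthy release, or None if there is none."""
    for release in reversed(history):  # newest entries are at the end
        if release.healthy:
            return release
    return None


releases = [
    Release(commit="a1b2c3", healthy=True),
    Release(commit="d4e5f6", healthy=True),
    Release(commit="0718ab", healthy=False),  # the release that just failed
]
target = rollback_target(releases)
```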

Prioritizing Deployments

A CI/CD pipeline increases the speed of operations by prioritizing which features and bug fixes are ready to be deployed.

Release on Demand

Continuous Delivery makes it possible to have software that is always in a releasable state. This increases an organization’s speed of delivery, and ability to nimbly address changing market needs.

Shortens the Release Cycle

Smaller, quicker, and more frequent integrations and deliveries result in shorter release cycles. This enables a business to gather feedback and data quickly, so that it can respond to the market, innovate faster, and improve customer experiences.

Frictionless Deployment Operations

An effective CI/CD pipeline is a relatively simple process to follow because automation has absorbed much of the complexity.

How to Implement an Effective CI/CD Pipeline: CI/CD Implementation Stages

A CI/CD pipeline provides an efficient way to ship small increments and iterations of code. Most CI/CD implementation stages overlap, especially the process of creating the software build, which spans version control, QA checks, and compilation.

Using a CI/CD solution that has in-built automation drastically simplifies the process.

What is depicted here is a generic implementation, which might differ from others depending on the tools or process emphasis each DevOps team prefers.

Plan

Propose a feature that needs to be built, or outline an issue that warrants change.

Code

Turn use case suggestions and flowcharts into code, peer-review code changes, and obtain design feedback.

Test

Verify code changes through testing, preferably automated testing. At this point, unit testing will usually suffice.

Repository

Push code to a shared repository like GitHub that uses version control software to keep track of changes made. Some consider this the first phase of the CI/CD pipeline.

Set up an Integration Testing Service

For example, Travis CI can continuously run automated tests, such as regression or integration tests, on software hosted on services like GitHub and Bitbucket.

Set up a Service To Test Code Quality

Better Code Hub can help continuously check code for quality in a CI/CD pipeline. This enables the software development team to spend less time fixing bugs. Better Code Hub uses 10 guidelines to gauge quality and maintainability in order to future-proof the code.

Build

This might overlap with compilation and containerization.

Assuming the code is written in a programming language like Java, it’ll need to be compiled before execution. Therefore, from the version control repository, it’ll go to the build phase where it is compiled.

The build phase involves acquiring the code from all the branches of the repository, merging them, then compiling the result with an appropriate compiler.

Use containerization services to create an image for the application. Containers are loosely isolated environments. Containers allow a developer to package an application with all the resources it needs, such as libraries, configuration files, and dependencies.

The benefit of containers is that the security and isolation they provide allow a DevOps team to run several containers simultaneously on a particular host. Docker makes it easier to create, deploy, and run your applications by using containers.

Some pipelines use Kubernetes to orchestrate their containers.

Testing Phase

Run build verification tests as early as possible in the pipeline. If something fails, you receive an automated message informing you that the build failed, allowing the DevOps team to check the continuous integration logs for clues.

Smoke testing might be done at this stage so that a badly broken build can be identified and rejected, before further time is wasted installing and testing it.
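A smoke test can be as simple as checking that the freshly built program starts and exits cleanly within a time limit. The sketch below is one possible shape for such a check. The two example commands are stand-ins that use the current Python interpreter in place of a real build, and `smoke_test` is a hypothetical helper name.

```python
import subprocess
import sys
from typing import List


def smoke_test(command: List[str], timeout_seconds: float = 10.0) -> bool:
    """Return True if the command starts and exits with status 0 in time."""
    try:
        completed = subprocess.run(
            command, capture_output=True, timeout=timeout_seconds
        )
    except (OSError, subprocess.TimeoutExpired):
        return False  # could not start, or hung past the time limit
    return completed.returncode == 0


# Stand-ins for a real build: one that starts cleanly and one that crashes.
good_build = [sys.executable, "-c", "print('service started')"]
broken_build = [sys.executable, "-c", "raise SystemExit(1)"]
```

A build that fails this check can be rejected immediately, before any slower test suites are scheduled.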

Push the Container (Docker) Image to Docker Hub

This makes it easy to share your containers across different platforms or environments, or even to go back to an earlier version.
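Going back to an earlier version is easiest when each image carries a tag tied to the commit it was built from. The sketch below only assembles the `docker build` and `docker push` argument lists and does not run them. The repository name `example/webapp`, the commit value, and the choice of a 12-character commit prefix as the tag are all assumptions for illustration.

```python
from typing import List


def image_reference(repository: str, commit_sha: str) -> str:
    """Tag the image with a short commit prefix so each version is traceable."""
    return f"{repository}:{commit_sha[:12]}"


def docker_commands(
    repository: str, commit_sha: str, context_dir: str = "."
) -> List[List[str]]:
    """Assemble the build and push commands a pipeline step might execute."""
    reference = image_reference(repository, commit_sha)
    return [
        ["docker", "build", "-t", reference, context_dir],
        ["docker", "push", reference],
    ]


# Hypothetical repository name and commit identifier.
commands = docker_commands("example/webapp", "0123456789abcdef0123")
```

A pipeline step could pass each list to `subprocess.run(..., check=True)` on a machine where Docker is installed and logged in to the registry.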

Deploy the App, Optionally To a Cloud-Based Platform

The cloud is where most organizations are deploying their applications. Heroku is an example of a relatively cheap cloud platform. Others might prefer Microsoft Azure or Amazon Web Services (AWS).

CI/CD Pipeline Tools

A CI/CD pipeline is meant to facilitate shipping code and functionality in short iterations and small increments. Identifying the best practices for DevOps to be executed successfully is important, but it still requires the proper tooling to accomplish.

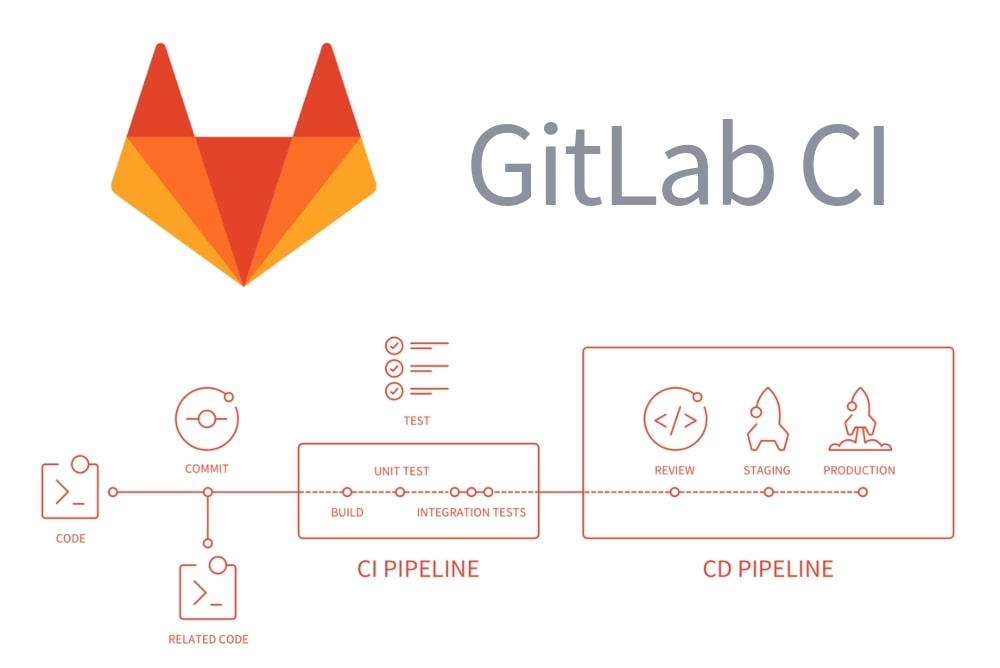

GitLab CI

A relatively new entrant into the fray, GitLab uses a YAML file to describe an entire CI pipeline.

It also boasts functionality such as Auto DevOps, which makes it simpler to have a pipeline built automatically and supplies multiple built-in tests in the package.

GitLab comes with native integrations into Kubernetes, which enable you to deploy automatically into a Kubernetes cluster. It streamlines its build process with Herokuish buildpacks that help it determine the language and how to build the application.

Travis CI

Travis CI is a Continuous Integration service that operates on a Software-as-a-Service (SaaS) model. It integrates seamlessly with GitHub, and it also stores the pipeline in YAML. It enables DevOps teams to build, test, and deploy their code with confidence.

Jenkins

Jenkins is the de facto standard in CI/CD pipelines. It is an open-source automation server, able to run in servlet containers like Apache Tomcat, that DevOps teams can reliably use to automate the parts of the pipeline related to Continuous Integration and Continuous Delivery.

Bamboo

Bamboo automates the software application release management process, thereby facilitating a continuous delivery pipeline.

What Makes a Good CI/CD Pipeline

A good CI/CD pipeline provides an organization with a shortened feedback cycle that equips it to ship more application products and features while reducing errors and mistakes, boosting productivity, and improving customer sentiment. It also gives the organization a proper understanding of the app development cycle.

A characteristic of a good CI/CD pipeline is that it incorporates the right automated tests at crucial points in the Software Development Life Cycle (SDLC). Other characteristics include the following:

Keeping Deployment Consistent by Using the Same Processes and Artifacts

The sign of a good CI/CD pipeline is that it uses the same processes and artifacts for both Continuous Integration and Continuous Deployment.

The CI portion of the pipeline generates a new build artifact from the source code, then hands it over to the CD process. This artifact can be a package generated from the source code, or a Docker image.

Irrespective of the build’s origin or format, the same artifact should be used across all environments.

A consistent artifact across all environments lets the CI/CD process test the code in QA or staging and subsequently deploy it to production with confidence that it will work, because the same artifact was used across the board.
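One way to enforce the "same artifact everywhere" rule is to record a checksum when the build is produced and verify it before each promotion. The sketch below uses SHA-256 from Python's standard library. The `promote` function, the environment names, and the byte string standing in for a real build are illustrative assumptions.

```python
import hashlib


def artifact_digest(artifact: bytes) -> str:
    """Fingerprint the build output so it can be recognized later."""
    return hashlib.sha256(artifact).hexdigest()


def promote(artifact: bytes, expected_digest: str, environment: str) -> str:
    """Deploy only if the artifact is byte-for-byte the one that was built."""
    actual = artifact_digest(artifact)
    if actual != expected_digest:
        raise ValueError(
            f"{environment}: artifact does not match the build that was tested"
        )
    return f"{environment}: deployed artifact {actual[:12]}"


build = b"example application bundle"  # stand-in for a real build artifact
digest = artifact_digest(build)        # recorded once, at build time
results = [promote(build, digest, env) for env in ("qa", "staging", "production")]
```

If someone rebuilds the code separately for production, the digest no longer matches and `promote` refuses the deployment, which is the guarantee the consistent-artifact rule is meant to provide.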

Speed

A good CI/CD pipeline manifests itself by providing quick feedback on the viability of a build. It drastically reduces the time required to build, test, and deploy an app, from initial coding to the eventual release to production.

CI/CD pipelines reduce the Mean Time To Resolution (MTTR) because of smaller integrations and deployments.

MTTR, which measures how quickly bugs and broken features are repaired, is positively impacted by the CI/CD pipeline. CI/CD pipelines can also scale quickly to meet burgeoning development demands in real time.
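MTTR is simply the average of the time between an issue being opened and being resolved. As a worked example with made-up timestamps (they are illustrative, not measurements), three incidents that took 30, 90, and 60 minutes to fix give an MTTR of (30 + 90 + 60) / 3 = 60 minutes. The function name below is a hypothetical helper.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Incident = Tuple[datetime, datetime]  # (opened, resolved)


def mean_time_to_resolution(incidents: List[Incident]) -> timedelta:
    """Average the opened-to-resolved duration over all incidents."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum(
        (resolved - opened for opened, resolved in incidents), timedelta()
    )
    return total / len(incidents)


# Illustrative incidents lasting 30, 90, and 60 minutes.
incidents = [
    (datetime(2024, 1, 10, 9, 0), datetime(2024, 1, 10, 9, 30)),
    (datetime(2024, 1, 12, 14, 0), datetime(2024, 1, 12, 15, 30)),
    (datetime(2024, 1, 15, 8, 0), datetime(2024, 1, 15, 9, 0)),
]
mttr = mean_time_to_resolution(incidents)
```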

Conclusion

While longer release cycles are generally more stable because they are usually better tested, they are sub-optimal in terms of cost, and the opportunity lost to meet customers’ needs in time. Effective CI/CD pipelines provide a sustainable way to collapse the cost and timelines of a release cycle.

Implementing an effective CI/CD pipeline levels the playing field, even for small teams with resource constraints, by accelerating production releases and increasing productivity, so that teams ship more products with fewer mistakes.

A CI/CD pipeline helps minimize errors, which creates a higher degree of confidence in the shipped product.